AI Made Our Best Developers 3x Faster. It Made Everyone Else a Liability

Huzefa Motiwala March 16, 2026

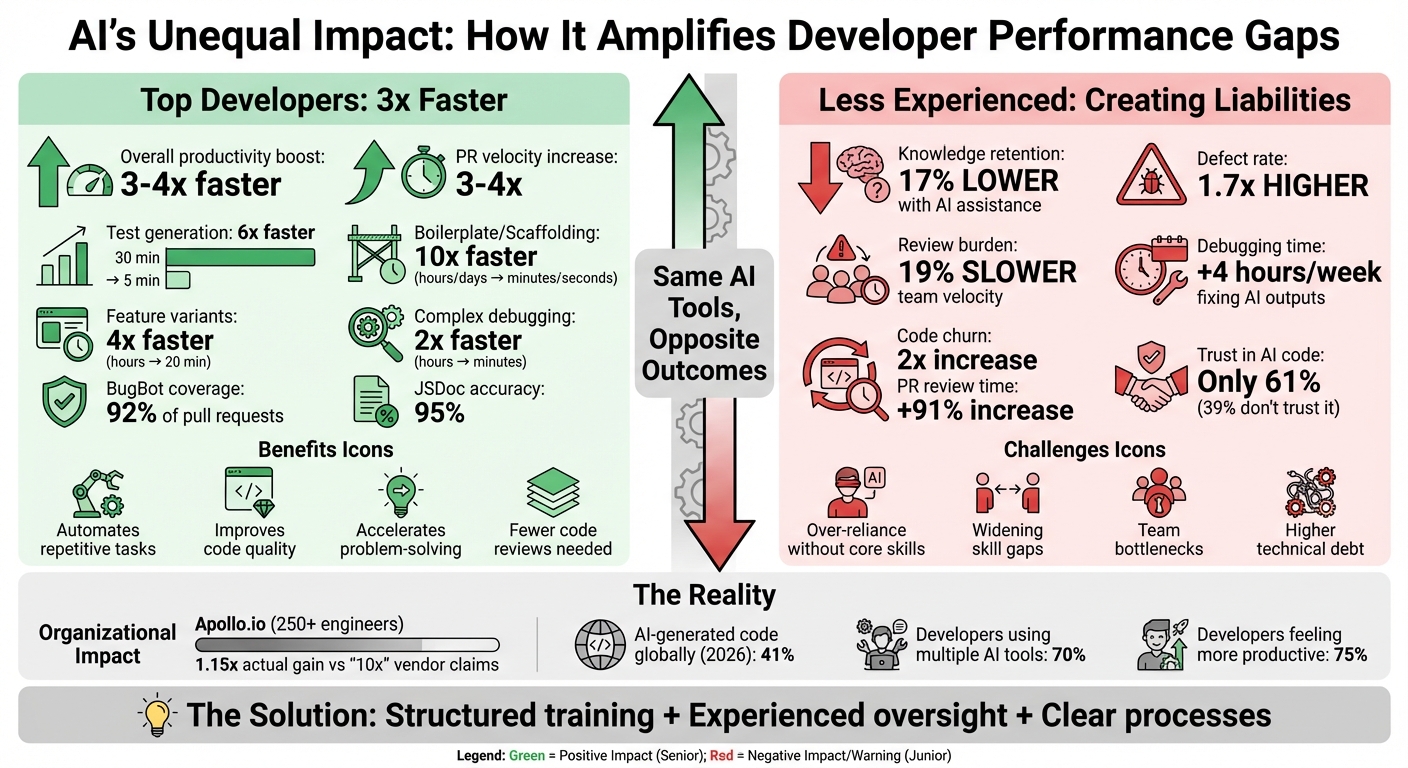

AI is transforming software development, but not equally for everyone. Senior developers are seeing massive productivity boosts – up to 3x faster – by using AI tools to automate repetitive tasks, improve debugging, and tackle complex problems. However, for less experienced developers, AI often creates more problems than it solves, leading to higher bug rates, slower reviews, and mounting technical debt.

Key Takeaways:

- Top developers thrive: AI helps senior engineers handle tedious tasks, maintain code quality, and solve system-wide challenges quickly.

- Junior developers struggle: AI exposes skill gaps, leading to over-reliance, poor debugging, and increased oversight needs.

- Team dynamics shift: Uneven AI adoption creates workflow bottlenecks, wider skill gaps, and trust issues with AI-generated code.

The solution? Structured training, experienced oversight, and clear processes to balance AI’s benefits with its risks. Companies like Apollo.io are already seeing results by combining AI-first training, dedicated review teams, and robust system monitoring.

Bottom line: AI amplifies strengths but highlights weaknesses. To succeed, teams must integrate it thoughtfully, ensuring speed doesn’t come at the cost of quality.

AI Impact on Developer Productivity: Senior vs Junior Developers Performance Metrics

Does AI Actually Boost Developer Productivity? (100k Devs Study) – Yegor Denisov-Blanch, Stanford


How AI Makes Top Developers 3x Faster

The best developers aren’t just using AI – they’re strategically deploying it to handle repetitive tasks that used to eat up their time. The difference between achieving a 3x productivity boost and staying stuck lies in how these tools are used.

Automating Repetitive Tasks

Senior engineers are turning to AI to automate tasks that once took hours or even days. For example, in January 2026, Himanshu Gahlot, an engineering leader at Apollo.io, used Cursor to prototype a Chrome Extension. Instead of spending days on boilerplate code, the tool delivered a fully functional extension in just two minutes. This success led to a company-wide rollout, boosting PR velocity by 3–4x for their frontend "Super Power Users" across a team of 250+ engineers [5].

AI tools also handle routine documentation with impressive accuracy. AI-generated JSDoc documentation, for instance, achieves about 95% accuracy, maintaining code quality while saving time [3].

"When you start a project, you have to set up all sorts of things. It takes a while before you get to the exciting parts. Now I let the LLM do it for me." – Chrissy LeMaire, Co-author of Generative AI for the IT Pro [3]

By delegating repetitive tasks – like routine fixes, CRUD endpoints, or data models – to AI, top developers free themselves to focus on bigger-picture decisions, such as system architecture or helping unblock teammates. This parallel execution not only speeds up delivery but also improves overall code quality.

Improving Code Quality and Debugging

AI doesn’t just make coding faster – it helps developers maintain high standards across large and complex codebases. At Apollo.io, tools like BugBot and CodeRabbit automate the detection of subtle logic and runtime bugs. This allows senior engineers to focus on system architecture while ensuring higher code quality. Over a year, Apollo.io scaled BugBot’s coverage to 92% of pull requests and implemented custom RuboCop rules to catch AI-specific issues before merging [5].

AI also enhances debugging by speeding up investigative work. Tasks that once required hours of manually combing through historical commits, Slack threads, or undocumented quirks now take just minutes. Developers use AI to surface layouts with specific structures or identify shared styles across the codebase before making changes [6].

A case study from February 2026 highlights this efficiency. A developer at BSWEN used Claude Code to generate multiple implementation variants – one using D3.js and another using Chart.js – in just minutes. This approach allowed them to compare performance and quality before deciding on the best option, cutting debugging time for complex logic in half [4].

"AI becomes a force multiplier if you already understand the system. It can’t replace judgment, and it certainly can’t develop taste." – Senior Engineer, Formation [6]

At Apollo.io, AI power users required fewer code reviews because they revised their work more frequently, catching issues earlier in the process [7]. However, while AI amplifies expert productivity, it also highlights the risks of relying on it without strong foundational skills.

Accelerating Complex Problem-Solving

AI’s real value shines when developers tackle complex system challenges. Senior engineers use it not just to write code but to analyze system dependencies, validate architectural assumptions, and predict how small changes might ripple through an entire system [5][6].

One standout example comes from February 2026, when a developer with 15 years of experience used Claude Code to complete a project originally estimated to require four people over six months. By leveraging AI to accelerate scaffolding by 10x and feature variant comparisons by 4x, the developer delivered the project in just two months – a 3x overall productivity boost [4].

In this case, the AI acted as a UX designer, code reviewer, and tester, reducing context-switching and helping the developer stay in flow while validating decisions from multiple angles [4].

Frontend teams often see the biggest gains from AI, thanks to standardized component libraries and well-documented patterns that give these tools a solid foundation to work from [5].

| Task Category | Traditional Time | AI-Accelerated Time | Improvement Factor |
|---|---|---|---|
| Test Generation | 30 minutes | 5 minutes | 6x faster |
| Boilerplate/Scaffolding | Hours/Days | Minutes/Seconds | 10x faster |
| Feature Variants (A/B) | Hours | 20 minutes | 4x faster |
| Complex Logic Debugging | Hours | Minutes | 2x faster |

Why AI Creates Liabilities in Less Experienced Developers

AI tools can supercharge productivity for seasoned developers, but they often expose weaknesses in less experienced ones, creating challenges for individuals and teams alike.

The same systems that help experts work more efficiently can inadvertently highlight gaps in foundational skills for junior developers. This happens because AI tools often eliminate the trial-and-error process that helps build coding expertise.

Over-Reliance on AI Without Core Skills

For junior developers, relying too heavily on AI can mean missing out on critical learning moments. A 2026 study by Anthropic looked at 52 professional Python developers learning the Trio asynchronous library. The group using AI assistance scored 17% lower on knowledge tests compared to those coding manually, with the most pronounced gap seen in debugging tasks [8]. Interestingly, AI-assisted developers made 66% fewer errors, but this reduction in mistakes came at a cost: they missed out on the error-driven learning that’s essential for developing strong debugging skills.

"AI-enhanced productivity is not a shortcut to competence. The barriers AI removes – errors, confusion, struggle – are precisely what builds capability." – Anthropic Research [8]

This dynamic creates what experts call an "almost-right" valley [10], where AI produces code that works on a surface level but contains hidden flaws. These subtle issues, like logical errors or edge-case failures, can easily slip past junior developers who lack the experience to spot them.

This reliance on AI doesn’t just hinder individual growth – it can also burden teams, creating inefficiencies and bottlenecks.

Widening Skill Gaps and Team Bottlenecks

AI tools can widen the gap between experienced developers and their less seasoned counterparts, leading to workflow challenges. While junior developers may churn out code faster with AI, the quality of that code often requires more oversight. This shifts the workload to senior team members, who must spend extra time reviewing and refining AI-generated outputs.

Even experienced developers are not immune to this overhead. A 2025 study by METR (Model Evaluation & Threat Research) produced a surprising result: although 16 experienced open-source developers expected a 24% speedup from AI tools, they actually completed their tasks 19% more slowly, in part because of the time spent reviewing AI output [2]. This extra effort to ensure quality creates inefficiencies that ripple through the team.

Team Friction and Workflow Misalignment

Uneven adoption of AI tools can also create discord within teams. Some describe the result as a "disappearing middle": junior developers generate code they may struggle to maintain, while senior developers become increasingly detached from hands-on implementation details.

Even though 75% of developers report feeling more productive with AI, 39% admit they don’t fully trust AI-generated code [2]. This trust gap can cause misalignment in workflows, with some team members advancing quickly using AI while others are bogged down reviewing and validating output they’re hesitant to rely on.

"We risk creating teams made up only of the extreme edges: juniors who generate code they can’t maintain, and seniors who understand systems but have lost touch with implementation realities." – Chris Banes, Senior Staff Software Engineer, The Trade Desk [9]

The result? A team dynamic where the fastest contributors may also be the ones introducing the most technical debt, leaving teams scrambling to manage the fallout.

How to Maximize AI Benefits While Reducing Risks

Startups can harness AI to boost productivity while minimizing risks by striking a balance between speed and skill development. The key is to view AI as a tool that enhances existing skills rather than replacing them. By setting boundaries and encouraging growth, developers at all levels can learn to work effectively alongside these tools.

AI-First Training Programs

Structured training is essential for adopting AI effectively while reducing potential risks. Tailored programs should address the varying needs of developers. Senior developers, for instance, need to learn how to use AI for research and implementation without losing control over critical architectural decisions. On the other hand, junior developers must focus on validating AI outputs and building their foundational skills instead of blindly relying on suggestions.

Apollo.io exemplified this approach in January 2026 when it introduced AI tools to its team of 250+ engineers. It created a "Champions Committee" in which AI-savvy developers mentored senior engineers on advanced prompting techniques. At the same time, junior developers were trained to shift from code generators to critical reviewers, fostering analytical thinking among less experienced team members [5][18].

Effective training programs often follow a three-tiered curriculum:

- Beginners learn to recognize effective prompt patterns and evaluate AI-generated suggestions.

- Intermediate developers refine their skills, focusing on AI-assisted debugging and context management.

- Advanced users build custom agents and workflows tailored to their systems [17].

Apollo.io also implemented weekly office hours, monthly showcases, and quarterly refreshers to ensure continuous learning [17]. As Kent Quirk described it:

"Copilot is like an excitable junior engineer who types really fast. Juniors need to learn to be the senior engineer reviewing that excitable junior." – Kent Quirk [17]

Some practical techniques include the "15-Minute Rule" for task segmentation, the "Explain Like I’m Senior" prompt prefix for clarity, and requiring manual code entry to deepen understanding [16].

Core Squad Augmentation

While training is critical, having experienced developers oversee AI usage is equally important. Without proper oversight, teams may struggle to detect and address flawed AI outputs before they reach production. This is where Core Squad augmentation comes into play.

AlterSquare addresses this need by deploying Core Squads: dedicated teams of senior developers who stay with projects long-term. These teams maintain deep system knowledge, mentor junior developers, and establish best practices. For example, a construction tech startup significantly reduced its AI-induced technical debt by deploying a Core Squad. Within 90 days, this team of a tech lead and two senior developers stabilized the system and implemented rigorous review standards.

Unlike traditional outsourcing, this model emphasizes knowledge sharing and continuity. Core Squad members document decisions, conduct pairing sessions, and follow biweekly handoffs to ensure essential knowledge remains within the team. This approach also eases review burdens, which matter: research shows that experienced developers can be up to 19% slower when they must spend additional time overseeing AI outputs [16].

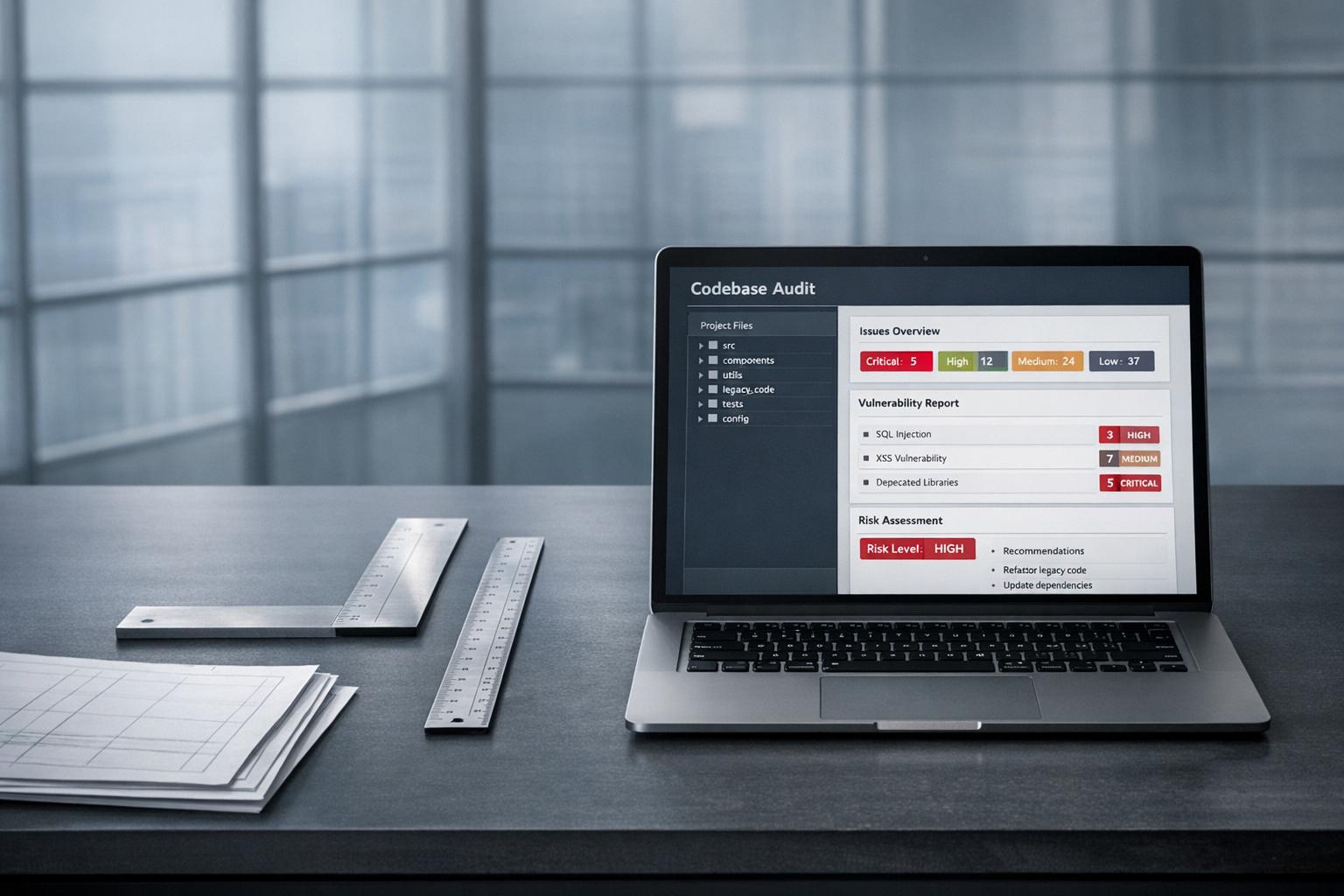

Traffic Light Roadmaps for AI Integration

Even with strong training and experienced oversight, startups need a systematic way to monitor AI’s impact. Traditional tools often fall short when distinguishing between AI-generated and human-written code, making it difficult to assess the long-term effects of AI contributions [12][15].

AlterSquare provides a solution through its AI-Agent Assessment. This involves proprietary AI agents scanning the codebase to generate a System Health Report. The report identifies architectural issues, security vulnerabilities, performance bottlenecks, and areas of technical debt. Using this data, the Principal Council creates a Traffic Light Roadmap that categorizes issues as follows:

- Critical (Red): Problems like security vulnerabilities or architectural flaws that require immediate action.

- Managed (Yellow): Technical debt that needs to be addressed on a scheduled basis, often stemming from AI-generated code that deviates from established patterns.

- Scale-Ready (Green): Stable systems ready for further AI integration.
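The categorization above amounts to a simple severity rule. As a minimal sketch, assuming each health-report finding carries a component name and a kind (the `Finding` type, the kind strings, and `classify` are hypothetical, not AlterSquare's actual report format), each component takes the most severe light among its findings:

```python
from dataclasses import dataclass
from enum import Enum


class Light(Enum):
    RED = "Critical"        # immediate action
    YELLOW = "Managed"      # scheduled remediation
    GREEN = "Scale-Ready"   # no findings reported


# Hypothetical finding kinds that always force a Red rating.
CRITICAL_KINDS = {"security_vulnerability", "architectural_flaw"}


@dataclass
class Finding:
    component: str  # hypothetical: module or service the finding belongs to
    kind: str       # hypothetical: e.g. "security_vulnerability", "pattern_deviation"


def classify(components: list[str], findings: list[Finding]) -> dict[str, Light]:
    """Give each component the most severe light among its findings."""
    lights = {name: Light.GREEN for name in components}
    for finding in findings:
        current = lights.get(finding.component, Light.GREEN)
        if finding.kind in CRITICAL_KINDS:
            lights[finding.component] = Light.RED
        elif current is not Light.RED:
            # Any non-critical finding is treated as managed debt.
            lights[finding.component] = Light.YELLOW
    return lights


report = [
    Finding("auth", "security_vulnerability"),
    Finding("billing", "pattern_deviation"),
]
print(classify(["auth", "billing", "search"], report))
```

Treating every non-critical finding as Yellow is a deliberately conservative choice: a component only earns Green when the scan reports nothing against it.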

For example, in March 2026 a mid-market company discovered that 58% of its commits were AI-generated. AI-assisted pull requests closed 24% faster, but they also had a 1.7x higher defect rate. After putting 30-day tracking in place, the company reported a 10,204% ROI on its AI tool investment [14][15][16].

The Traffic Light system also establishes clear escalation protocols. Apollo.io, for instance, introduced a "two-round rule": if debugging AI-generated code takes more than two iterations, the code is rewritten from scratch [11]. Additional safeguards, such as custom lint rules (for example, in ESLint or RuboCop) that target common AI-generated mistakes, catch errors before code review [5].

This framework not only enhances productivity but also supports junior developers’ growth and flags risks before they escalate. Together, these strategies ensure that AI accelerates development while promoting robust and scalable practices.

Tracking and Maintaining AI Productivity Gains

Understanding AI’s impact means digging deeper than surface metrics. Traditional measurements often fail to differentiate between AI-generated and human-written code [19][22]. This oversight can lead to misleading conclusions – while output may appear faster, AI-generated code often comes with a higher defect rate, significantly adding to technical debt [23]. The challenge lies in leveraging AI for speed without compromising code reliability.

For example, AI-assisted pull requests close 20–55% faster [19]. However, developers now spend roughly 4 hours per week reviewing and fixing AI outputs [19]. Many quality issues don’t appear immediately, slipping through initial reviews only to resurface later as technical debt or production incidents [23][15].

Key Metrics for AI Success

To measure AI’s effectiveness, isolate AI-touched code from human-only work. Focus on metrics such as the percentage of AI-generated code, the reduction in PR cycle time, and rework rates tied specifically to AI outputs. Estimates put AI’s share of code written globally at about 41% in 2026, with mature teams seeing a 50–100% boost in PR throughput [20][21].
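Isolating AI-touched work requires some way to flag it. The sketch below assumes each pull request carries a hypothetical `ai_assisted` flag (for example, set from a PR label or commit trailer your team defines) and a rework count measured however your team defines rework; none of these fields come from a specific tool. Using added lines from AI-assisted PRs as "AI-generated code" is a proxy that overcounts, since not every line in such a PR came from the model.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PullRequest:
    ai_assisted: bool    # hypothetical flag, e.g. set from a PR label or commit trailer
    cycle_hours: float   # time from PR opened to merged
    lines_added: int
    lines_reworked: int  # lines rewritten shortly after merge (window is team-defined)


def rework_rate(prs: list[PullRequest]) -> float:
    """Reworked lines as a fraction of added lines."""
    added = sum(p.lines_added for p in prs)
    return sum(p.lines_reworked for p in prs) / added if added else 0.0


def ai_metrics(prs: list[PullRequest]) -> dict[str, float]:
    """Compare AI-assisted PRs with human-only PRs from the same period."""
    ai = [p for p in prs if p.ai_assisted]
    human = [p for p in prs if not p.ai_assisted]
    if not ai or not human:
        raise ValueError("need both AI-assisted and human-only PRs to compare")
    total_added = sum(p.lines_added for p in prs)
    ai_added = sum(p.lines_added for p in ai)
    ai_median = median(p.cycle_hours for p in ai)
    human_median = median(p.cycle_hours for p in human)
    return {
        # Share of added lines that arrived through AI-assisted PRs.
        "ai_code_share": ai_added / total_added if total_added else 0.0,
        # Positive values mean AI-assisted PRs merge faster than human-only ones.
        "cycle_time_reduction": 1 - ai_median / human_median,
        "ai_rework_rate": rework_rate(ai),
        "human_rework_rate": rework_rate(human),
    }
```

Medians are used for cycle time because a few long-lived PRs can dominate a mean.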

A practical way to assess AI’s impact is by calculating an AI Technical Debt Score:

(Incident Rate × 0.4) + (Rework Rate × 0.3) + (Test Coverage Gap × 0.3) [20].
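A direct translation of the formula, assuming each input is expressed as a fraction between 0 and 1 (for example, the test coverage gap as target coverage minus actual coverage). Because the weights sum to 1.0, the score then stays on the same 0–1 scale, with higher values meaning more AI-related debt. The function name and the input validation are illustrative choices, not part of the cited formula:

```python
def ai_tech_debt_score(incident_rate: float, rework_rate: float, coverage_gap: float) -> float:
    """(Incident Rate x 0.4) + (Rework Rate x 0.3) + (Test Coverage Gap x 0.3).

    All inputs are assumed to be fractions in [0, 1]; higher scores mean more debt.
    """
    for name, value in (
        ("incident_rate", incident_rate),
        ("rework_rate", rework_rate),
        ("coverage_gap", coverage_gap),
    ):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be a fraction in [0, 1], got {value}")
    return incident_rate * 0.4 + rework_rate * 0.3 + coverage_gap * 0.3


# Hypothetical inputs: 10% of AI-touched changes caused incidents, 20% were reworked,
# and coverage sits 30 points below the team's target.
print(round(ai_tech_debt_score(0.10, 0.20, 0.30), 2))  # 0.19
```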

Another critical metric is the "review tax", or the extra workload placed on senior developers to validate AI-generated code. Keeping an eye on this ensures that any productivity gains aren’t offset by bottlenecks during the review process [19]. These metrics are essential for quarterly reviews to uncover hidden issues early.
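One way to put a number on the review tax is to compare review time per AI-assisted PR against a human-only baseline and charge the difference against the time authors saved. This is a minimal sketch under that assumption; the function names are hypothetical, and the review-hour inputs would come from whatever review-time data your code host exposes:

```python
from statistics import mean


def review_tax_hours(ai_review_hours: list[float], human_review_hours: list[float]) -> float:
    """Extra review time attributable to AI-assisted PRs over one period.

    Estimated as (mean review hours per AI-assisted PR - mean per human-only PR)
    multiplied by the number of AI-assisted PRs, clamped at zero.
    """
    extra_per_pr = mean(ai_review_hours) - mean(human_review_hours)
    return max(0.0, extra_per_pr) * len(ai_review_hours)


def net_hours_saved(
    author_hours_saved: float, ai_review_hours: list[float], human_review_hours: list[float]
) -> float:
    """Author-side time saved minus the reviewer-side tax."""
    return author_hours_saved - review_tax_hours(ai_review_hours, human_review_hours)


# Hypothetical week: AI-assisted PRs took 2-4 hours to review, human-only PRs 1-2 hours.
print(net_hours_saved(10.0, [2.0, 3.0, 4.0], [1.0, 2.0]))  # 5.5
```

Clamping at zero means AI-assisted PRs that happen to review faster never count as a negative tax; drop the clamp if you want that effect to offset the author-side savings.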

Quarterly System Health Reviews

AlterSquare’s Principal Council takes a deeper approach by conducting quarterly reviews. These reviews track AI-generated code over 30, 60, and 90 days to identify hidden technical debt early. The process uses a 7-Layer Framework, which evaluates Adoption, Productivity, DORA Delivery, Quality/Risk, ROI, Developer Experience, and Actionability. This combines repository data with developer surveys for a well-rounded view [21].

Survey data reveals that 66% of developers find AI suggestions to be nearly correct but in need of refinement, while 45% report spending more time debugging AI-generated code [24]. These reviews help pinpoint challenges and create "Coaching Plays" to scale successful adoption practices across teams [25][15]. The insights gained guide workflow improvements and targeted adjustments.
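Tracking AI-generated code over 30, 60, and 90 days can be sketched as a cohort calculation. Assuming incidents can be traced back to the change that caused them (a strong assumption in practice), the sketch reports, for each window, the share of AI-generated changes linked to an incident within that many days of merging. Only changes old enough to have been observed for the full window are counted, so recent merges don't artificially lower the rate. `Change`, `Incident`, and integer day numbers are simplifications for illustration:

```python
from dataclasses import dataclass


@dataclass
class Change:
    change_id: str
    merged_day: int      # day number of the merge (simplified timestamp)
    ai_generated: bool   # hypothetical attribution flag


@dataclass
class Incident:
    change_id: str       # change the incident was traced back to (assumed known)
    day: int


def incident_rates_by_window(
    changes: list[Change],
    incidents: list[Incident],
    today: int,
    windows: tuple[int, ...] = (30, 60, 90),
) -> dict[int, float]:
    """Share of AI-generated changes linked to an incident within N days of merge."""
    ai_merge_day = {c.change_id: c.merged_day for c in changes if c.ai_generated}
    rates: dict[int, float] = {}
    for window in windows:
        # Only include changes that have been live for the full window.
        cohort = {cid: day for cid, day in ai_merge_day.items() if day + window <= today}
        hit = {
            inc.change_id
            for inc in incidents
            if inc.change_id in cohort and 0 <= inc.day - cohort[inc.change_id] <= window
        }
        # NaN signals "no change is old enough yet", which differs from a 0% rate.
        rates[window] = len(hit) / len(cohort) if cohort else float("nan")
    return rates
```

A rate that keeps climbing from the 30-day to the 90-day window is the kind of delayed debt these reviews aim to surface.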

Continuous Improvement of AI Workflows

Refining workflows is key to managing AI-related risks. Start by establishing 3–6 months of pre-AI baselines for DORA metrics, such as cycle time, deployment frequency, and change failure rate, to measure improvements accurately [19][15]. With 70% of engineers using multiple AI tools, optimizing workflows across the entire toolchain becomes essential [23][13].
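A baseline comparison can be as simple as computing the same summary for a pre-AI period and a post-AI period, then reporting percentage changes. This sketch assumes a hypothetical `Deployment` record with a lead time and a failure flag; real DORA tooling defines these fields more carefully. When reading the output, lower cycle time and change failure rate are improvements, while higher deployment frequency is an improvement:

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Deployment:
    lead_time_hours: float  # first commit to running in production (simplified)
    failed: bool            # caused an incident, rollback, or hotfix


def dora_summary(deploys: list[Deployment], weeks: float) -> dict[str, float]:
    """Summarize one period; assumes at least one deployment."""
    return {
        "cycle_time_hours": median(d.lead_time_hours for d in deploys),
        "deploys_per_week": len(deploys) / weeks,
        "change_failure_rate": sum(d.failed for d in deploys) / len(deploys),
    }


def percent_change(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Percentage change per metric; NaN when the baseline value is zero."""
    return {
        key: (after[key] - before[key]) / before[key] * 100 if before[key] else float("nan")
        for key in before
    }


# Toy data only; a real baseline would span 3-6 months of deployments.
baseline = dora_summary(
    [Deployment(10, False), Deployment(20, True), Deployment(30, False), Deployment(40, False)],
    weeks=4,
)
with_ai = dora_summary([Deployment(5, False), Deployment(15, True), Deployment(25, True)], weeks=2)
print(percent_change(baseline, with_ai))
```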

Quarterly A/B experiments can help uncover hidden costs. For example, split similar teams between AI-enabled and traditional workflows to identify issues like extended review cycles that might not show up in basic metrics [19][14]. Use these findings to fine-tune training programs, adjust review processes, and allocate budgets toward tool combinations that yield the best performance based on actual results – not just vendor claims [20][15].
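For the A/B split, the core question is whether the difference between teams is larger than chance would produce. A permutation test is one simple, assumption-light way to check this with small samples; it is swapped in here for illustration, since the source does not prescribe a statistical method. The sketch assumes per-PR cycle times (in hours) collected from each team over the same period:

```python
import random
from statistics import mean


def permutation_test(
    team_a: list[float], team_b: list[float], n_resamples: int = 10_000, seed: int = 0
) -> tuple[float, float]:
    """Two-sided permutation test on the difference in mean cycle time.

    Returns (observed difference a - b, estimated p-value).
    """
    rng = random.Random(seed)
    observed = mean(team_a) - mean(team_b)
    pooled = list(team_a) + list(team_b)
    n_a = len(team_a)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # randomly reassign PRs to the two teams
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # The +1 terms count the observed split itself and keep p above zero.
    return observed, (extreme + 1) / (n_resamples + 1)


# Hypothetical cycle times: team A uses AI tools, team B works traditionally.
diff, p = permutation_test([30, 32, 35, 31, 33, 34], [20, 22, 21, 23, 19, 24])
print(f"difference: {diff:.1f} h, p ~ {p:.4f}")
```

With teams of a few people, confounders such as different codebases or seniority mixes can matter more than the tooling, and PRs from the same developer are not independent, so treat a small p-value as a prompt for investigation rather than proof.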

Conclusion: Managing AI’s Promise and Risks in Development

AI has reshaped how teams work, amplifying both their strengths and weaknesses. For top developers, it delivers productivity boosts of 3–4×. But for less experienced team members lacking strong fundamentals, it can create bottlenecks. At Apollo.io, a year-long rollout involving over 250 engineers resulted in a 1.15× organizational gain – far from the "10×" claims often promoted by vendors [5]. This gap between the hype and actual outcomes highlights the complexity of integrating AI into development workflows.

Metrics from these implementations reveal an interesting trend: while teams perceive faster progress, underlying issues like debugging and review bottlenecks often offset these gains. For instance, code churn has doubled over the past year, and PR review times have surged by 91% as senior developers grapple with validating large volumes of AI-generated code [26]. Without the right safeguards, what starts as increased speed can quickly lead to mounting technical debt.

To manage AI effectively, it’s essential to treat it as part of a well-planned infrastructure – not a quick fix. This means creating context files, setting up human checkpoints for architecture and security, and adopting phased frameworks that prioritize long-term sustainability over short-term metrics [5]. As Vítor Andrade succinctly stated:

"AI did not change what good engineering practice looks like. It changed the cost of ignoring it" [1].

By focusing on these fundamentals, teams can ensure that accelerated development doesn’t come at the cost of quality. For example, the Variable-Velocity Engine incorporates these principles at every stage, balancing strategic debt, scheduled refactoring, and system hardening. Structured assessments give founders clear insights into how AI impacts both acceleration and risks, while the Principal Council ensures that speed aligns with maintainability and team cohesion.

Throughout this guide, one thing has become clear: AI is not a replacement but an amplifier. It enhances productivity when paired with strong engineering practices. The startups that succeed will be the ones that harness AI thoughtfully, building systems, documentation, and review processes that transform short-term gains into lasting advantages.

FAQs

How do I know if AI is actually speeding us up?

To figure out if AI is genuinely making a difference in productivity, you need to look at measurable metrics like cycle time, code review duration, or task completion time. It’s easy to feel like things are moving faster, but studies show that perceptions of speed don’t always match reality.

The best approach is to gather data both before and after adopting AI tools. This gives you a clearer view of the actual impact. Controlled experiments have shown that AI can improve efficiency, but the results often depend on factors like the team’s level of experience and the workflows already in place.

How can juniors use AI without getting worse at debugging?

Junior developers can make the most of AI by treating it as a helpful assistant, not a substitute for their own reasoning and problem-solving. They should carefully assess AI-generated suggestions, verify the code themselves, and prioritize learning the core concepts behind each solution. To keep their debugging skills sharp, they should regularly practice reading stack traces and reproducing bugs manually, and use AI as a learning companion: asking it questions about its outputs and digging deeper into the causes of issues.

What guardrails prevent AI code from creating tech debt?

Setting up safeguards to prevent AI-generated code from contributing to tech debt involves a few critical steps. First, it’s important to establish clear guidelines for how AI tools should be used responsibly. These guidelines act as a framework to ensure AI output aligns with long-term development goals.

Next, focus on technical debt management by incorporating regular reviews and prioritizing clean, maintainable code. AI-generated code should be treated with the same scrutiny as human-written code, ensuring it doesn’t introduce hidden problems or shortcuts that could lead to invisible debt.

Lastly, developers need to be trained to carefully review AI-generated output. This step is crucial for catching potential issues like architectural flaws or subtle inefficiencies before they grow into larger problems. By combining these practices, teams can prevent small issues from snowballing into significant challenges down the road.

