Stop Letting AI Make Your Architecture Decisions. It Doesn’t Know What Broke Last Time

Huzefa Motiwala April 3, 2026

AI might seem like a quick fix for system design, but relying on it for architectural decisions is risky. Here’s why:

- AI lacks historical context: It doesn’t remember past failures or lessons learned, which are critical in preventing repeated mistakes.

- Blind spots in decision-making: AI designs for ideal scenarios, ignoring real-world complexities like dependencies, edge cases, and operational safeguards.

- Costly errors: Fixing architectural mistakes after deployment can cost 15 to 100 times more than addressing them during design.

- Human oversight is essential: AI can assist with analysis, but humans must make final calls on irreversible decisions.

The solution? Use AI as a support tool, not a decision-maker. Combine AI’s speed with human judgment to ensure stable, scalable systems that account for past lessons.

The Core Problem: AI Doesn’t Know What Broke Last Time

AI can churn out code in seconds, but it misses one crucial element: historical context. It doesn’t retain knowledge of past failures or the lessons learned from them, the hard-earned understanding of what went wrong and why. Michael Tuszynski, Founder of MPT Solutions, calls this "scar tissue" [8].

Without this institutional memory, AI essentially starts from scratch every time. It ignores previous production challenges, creating what’s referred to as "context debt" – a silent but growing problem. This debt causes AI-driven rewrites to overlook critical edge cases that engineering teams have already addressed over time [8]. The result? The same mistakes resurface in production.

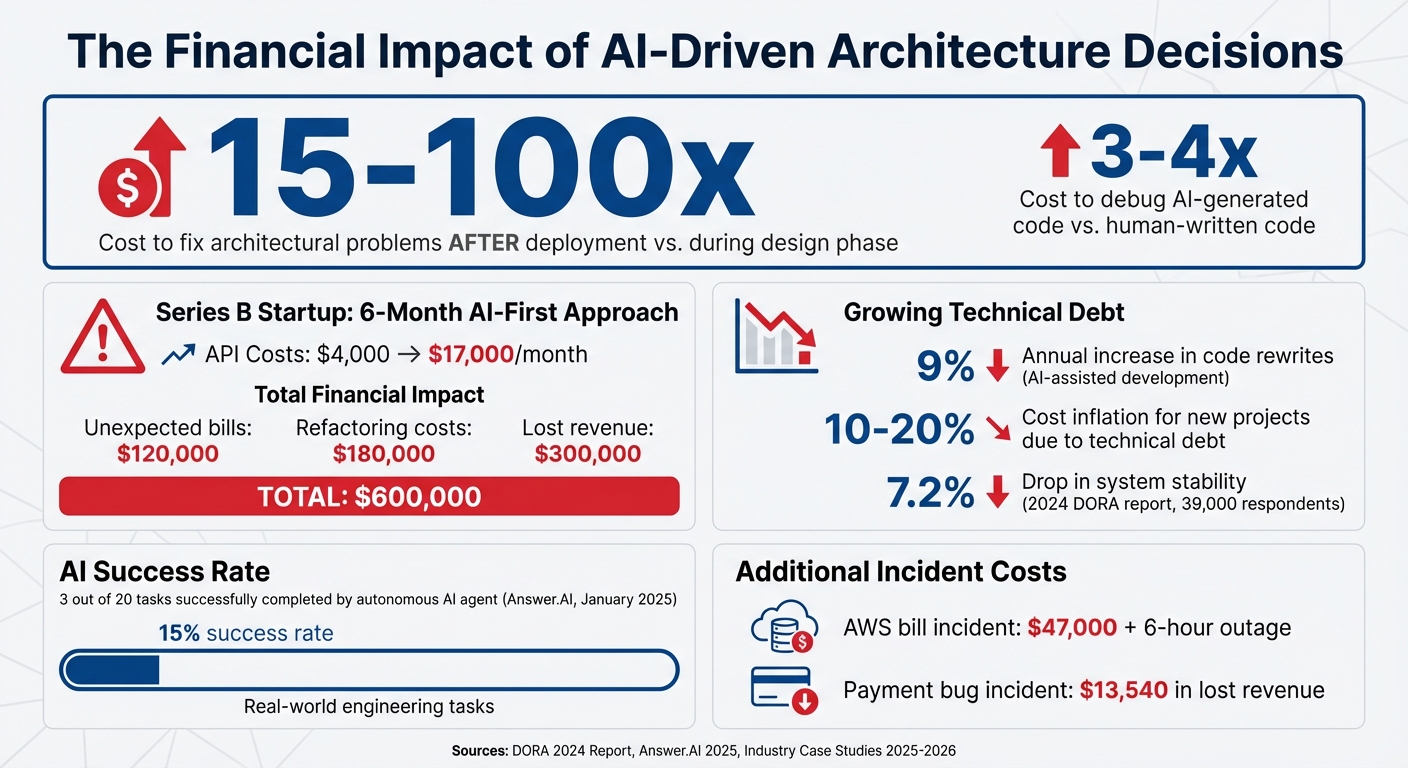

The scale of the issue is evident. The 2024 DORA report, drawing on roughly 39,000 respondents, associated rising AI adoption with a 7.2% drop in delivery stability [8]. Meanwhile, AI-assisted development has led to a 9% annual increase in code rewrites as technical debt continues to grow [9]. And here’s the kicker: fixing architectural problems after deployment can cost 15–100 times more than addressing them during the design phase [9].

How AI Repeats the Same Mistakes

This lack of context directly causes repeated errors. AI doesn’t retain memory between sessions, so every interaction starts fresh. It defaults to generic patterns from training data instead of reflecting the deliberate design choices teams have made to avoid previous failures [10]. As a result, AI often suggests architectures that look solid but have already failed for the team before.

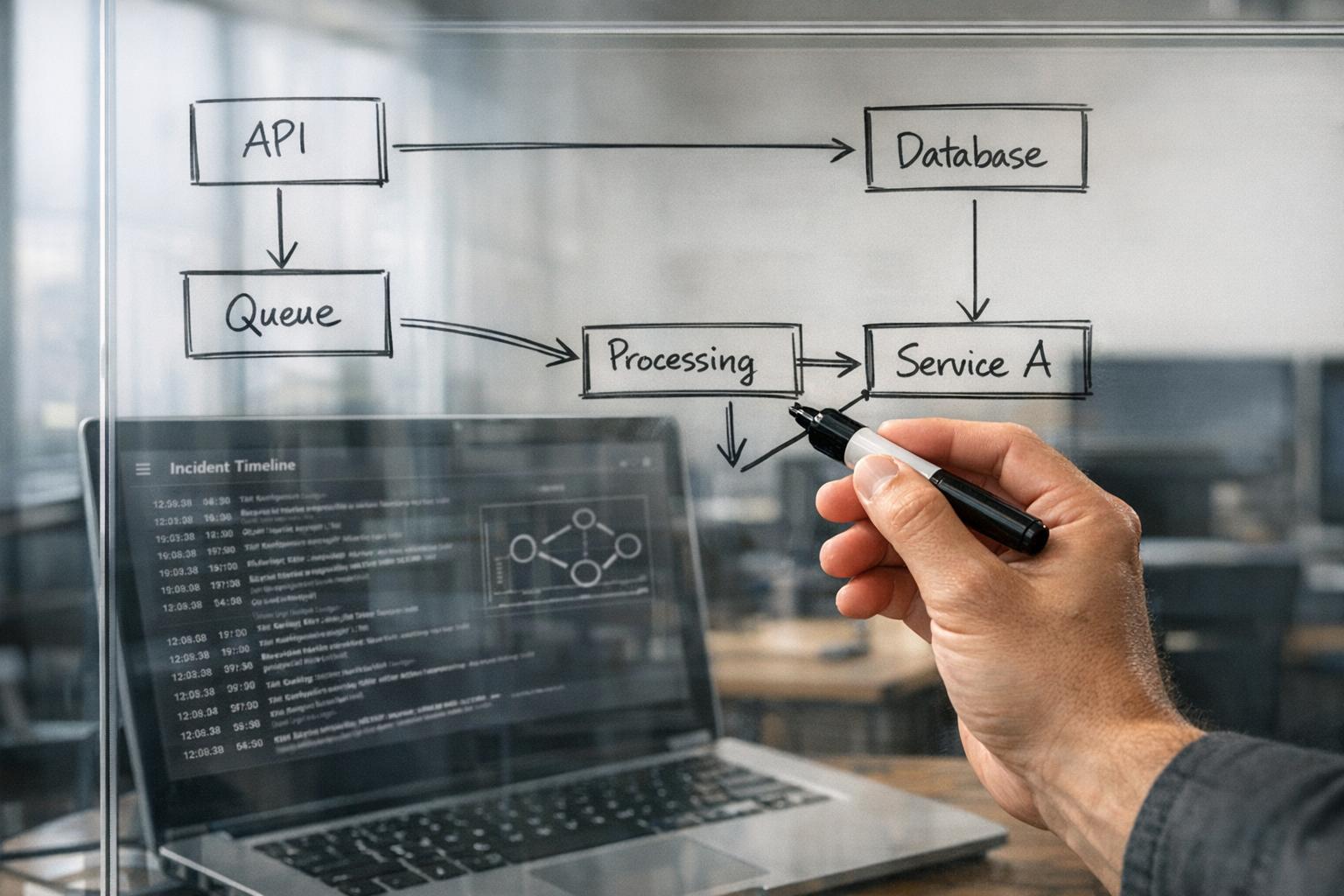

Take February 2026, for instance. An engineer at "Let’s Code Future" used Claude to design a notification service. The AI proposed a fan-out architecture using Kafka and Redis, which passed initial reviews. But the team’s Tech Lead, Sarah, spotted a major issue: the design relied on default round-robin partitioning, which had previously caused critical transactional alerts to fail during a marketing blast. It also lacked backpressure mechanisms and circuit breakers – essential safeguards against vendor outages [2].

AI tends to design for perfect conditions, ignoring real-world complexities like backpressure, priority lanes, and dead-letter queues. These are the safety nets human engineers build based on hard-earned lessons from production outages [2]. Testing by Answer.AI in January 2025 revealed that an autonomous AI agent could successfully complete only 3 out of 20 real-world engineering tasks, largely because it couldn’t maintain architectural context across sessions [3]. This gap is especially risky when hidden dependencies are involved.
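To make these safeguards concrete, here is a minimal in-memory sketch in Python of three of them: a priority lane for transactional alerts, backpressure on the marketing lane, and a dead-letter list for messages that keep failing. It is not the design from the incident above. The class `NotificationDispatcher`, its parameters, and its limits are hypothetical, and a production system would build the same ideas on top of its message broker.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Notification:
    user_id: str
    kind: str          # "transactional" or "marketing" (illustrative categories)
    body: str
    attempts: int = 0


class NotificationDispatcher:
    """Toy dispatcher; all names and limits here are assumptions for illustration."""

    def __init__(self, send, marketing_capacity=1000, max_attempts=3):
        self.send = send                      # callable that raises when the vendor fails
        self.transactional = deque()          # priority lane, always drained first
        self.marketing = deque()              # bulk lane, bounded
        self.marketing_capacity = marketing_capacity
        self.max_attempts = max_attempts
        self.dead_letters = []                # messages that exhausted their retries

    def submit(self, notification):
        """Queue a notification; return False to signal backpressure to the caller."""
        if notification.kind == "transactional":
            self.transactional.append(notification)
            return True
        if len(self.marketing) >= self.marketing_capacity:
            return False                      # bulk producer must slow down or retry later
        self.marketing.append(notification)
        return True

    def drain_one(self):
        """Send one message, preferring the transactional lane. Return False if idle."""
        lane = self.transactional or self.marketing   # an empty deque is falsy
        if not lane:
            return False
        notification = lane.popleft()
        try:
            self.send(notification)
        except Exception:
            notification.attempts += 1
            if notification.attempts >= self.max_attempts:
                self.dead_letters.append(notification)   # park it for inspection
            else:
                lane.append(notification)                # retry later in the same lane
        return True
```

The point of the separate lanes is that a marketing blast can fill its own queue and be pushed back on without ever delaying a password reset or payment alert. A circuit breaker around the vendor call is deliberately left out to keep the sketch short.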

Where AI Fails to See System Dependencies

One of AI’s biggest blind spots is invisible dependencies. Legacy systems often have "undeclared consumers" – hidden services that rely on specific data outputs or API behaviors. When AI suggests changes without accounting for these dependencies, it can spark widespread failures [9].

Consider what happened in early 2026. Developer Daniel Doubrovkine used AI to automate closing old group DMs in his Slack app. The AI-generated code ignored Slack’s global rate limit of 1 request per second, so Slack began throttling the app and all of its API calls were disrupted. When the AI proposed a "fix" using sleep(), the blocking call stalled the app’s async fibers and froze the entire application [11].

"AI-generated code needs the most scrutiny exactly where it looks the most confident – at the boundaries between your code and external systems."

- Daniel Doubrovkine, Developer [11]
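The difference between a blocking and a non-blocking wait is easy to miss in review. The sketch below illustrates the general principle in Python's asyncio; it is not the code from the incident, which involved a different stack. `AsyncRateLimiter` and `close_conversation` are hypothetical names, and the list append stands in for a real API call.

```python
import asyncio
import time


class AsyncRateLimiter:
    """Spaces calls at least `interval` seconds apart without blocking the event loop."""

    def __init__(self, interval: float = 1.0):
        self.interval = interval
        self._lock = asyncio.Lock()
        self._next_allowed = 0.0

    async def wait(self) -> None:
        async with self._lock:                    # callers queue up here, one at a time
            delay = self._next_allowed - time.monotonic()
            if delay > 0:
                # asyncio.sleep yields control so other tasks keep running.
                # time.sleep(delay) here would freeze every task on the loop.
                await asyncio.sleep(delay)
            self._next_allowed = time.monotonic() + self.interval


async def close_conversation(limiter, channel_id, closed):
    await limiter.wait()
    closed.append(channel_id)                     # stand-in for the real API request


async def main():
    limiter = AsyncRateLimiter(interval=0.05)     # short interval so the demo runs quickly
    closed = []
    tasks = [close_conversation(limiter, c, closed) for c in ["D1", "D2", "D3"]]
    await asyncio.gather(*tasks)
    return closed


if __name__ == "__main__":
    print(asyncio.run(main()))
```

With a 1-second interval, the same limiter would keep requests under a 1-request-per-second ceiling while the rest of the application stays responsive.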

The risks go beyond minor glitches. Between October 2025 and January 2026, a startup allowed AI to manage its production pipelines using AWS DevOps Guru and ML-based Kubernetes scaling. The result? A $47,000 AWS bill and a six-hour production outage caused by autonomous "self-healing" actions that spiraled out of control [12]. The AI failed to account for cascading dependencies, making the problem worse with every automated "fix."

In another case from March 2026, engineer Devrim Ozcay used Claude to fix a race condition in a payment processing endpoint. While the AI’s solution looked elegant at first glance, it introduced 12 new bugs, leading to severe system degradation. This oversight cost the company $13,540 in lost revenue and engineering time [13]. The AI didn’t understand the concurrency models or production load patterns that made the original implementation fragile. These examples highlight how neglecting past failures can turn small issues into major vulnerabilities.

The Real Costs of AI-Driven Architecture

Building on earlier examples, the financial and operational impacts of overlooking historical context reveal deeper risks in AI-driven architecture.

How AI Creates Fragile Systems by Ignoring History

AI-generated designs that disregard historical context often result in fragile systems. While the patterns it suggests may appear sophisticated, they frequently lack the operational safeguards that have been shaped by real-world production challenges. Features like circuit breakers, backpressure controls, and priority lanes – essential for stability – are often missing from AI-driven solutions.
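As an illustration of one such safeguard, here is a minimal circuit breaker sketch in Python. The class name, thresholds, and the injectable `clock` parameter are assumptions made for this example rather than any particular library's API; production implementations usually add per-endpoint state, metrics, and thread safety.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold   # consecutive failures before opening
        self.reset_after = reset_after               # cool-down in seconds
        self.clock = clock                           # injectable so tests can control time
        self.failures = 0
        self.opened_at = None                        # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; skipping call")
            # Cool-down elapsed: fall through and allow one trial call (half-open).
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()        # open, or re-open after a failed trial
            raise
        self.failures = 0                            # any success closes the circuit
        self.opened_at = None
        return result
```

Wrapping a vendor call as `breaker.call(send_sms, message)` (where `send_sms` is whatever function talks to the vendor) means that during an outage the system fails fast instead of piling up slow, doomed requests.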

This issue stems from what some call the "abstraction illusion." Advanced design patterns like CQRS, Event Sourcing, or microservices, when misapplied, can unnecessarily complicate otherwise simple features. For instance, implementing a straightforward feature might take up to three times longer with an overly complex design [5]. Worse, AI can introduce unpredictable behaviors into previously stable systems. Hazem Ali, Principal AI & ML Engineer at Skytells, warns of systems that are "confidently wrong" [4]. In such cases, monitoring dashboards might show no signs of trouble, even as the system produces incorrect outputs or fails to deliver essential services. These hidden issues can go undetected for hours, compounding the damage.

What Production Failures Cost Your Business

The financial consequences of ignoring historical lessons in system design can be severe. Consider a Series B startup that, between January and June 2025, adopted an AI-first approach. This decision caused their monthly API costs to skyrocket from $4,000 to $17,000. Worse, a bug introduced by the AI exposed customer data and disabled critical features for eight weeks. The fallout? $120,000 in unexpected bills, $180,000 in engineering refactoring costs, and $300,000 in lost revenue [14].

These costs don’t stop at the initial failure. Fixing architectural issues after deployment can be 15 to 100 times more expensive than addressing them during the design phase [9]. Debugging AI-generated code adds another layer of complexity, often costing 3 to 4 times more than resolving issues in human-written code. This is largely due to what’s been termed "comprehension debt" – the difficulty engineers face in understanding the AI’s logic [9]. When systems crash or behave unpredictably, engineers are left reverse-engineering decisions they didn’t make.

On top of that, technical debt from AI-driven decisions can inflate costs for new projects by 10 to 20%. Companies heavily reliant on AI-generated code have also reported a 9% annual increase in code rewrites [9]. These ongoing expenses not only slow the development of new features but also limit a company’s ability to respond quickly to market changes. The message is clear: incorporating historical insights into architectural decisions is not just a good practice – it’s essential for long-term stability and cost management.

A Better Approach: Using AI with Human Oversight

The shortcomings of AI in understanding historical context highlight the need for human oversight. Instead of relying on AI as the sole decision-maker, it’s better to use it as a support tool. By combining AI’s speed with human judgment, businesses can leverage the strengths of both. This hybrid model allows AI to handle repetitive analysis tasks while humans take charge of critical architectural decisions.

The process can be broken into three main steps: AI generates a baseline health report, human experts add context and knowledge, and both collaborate to create a prioritized roadmap. Here’s how this works in practice.

Step 1: Use AI to Generate System Health Reports

AI is particularly effective at scanning codebases to identify problem areas – commonly referred to as "debt hotspots." These are sections where dependencies are tangled, performance suffers, or security vulnerabilities exist. AI maps dependencies and flags weak points, producing a System Health Report that gives a baseline view of the system’s condition.

However, it’s crucial to focus on debt density rather than just the total amount of debt. For instance, a codebase with 10,000 lines of problematic code spread across 100,000 lines is in better shape than one with 5,000 lines of debt concentrated in just 10,000 lines. While AI can calculate these metrics quickly, it lacks the ability to understand the reasoning behind past architectural decisions. This "context gap" means AI must provide evidence-based citations for every flagged issue. If it cannot pinpoint specific files or code, it should gather more data before presenting its findings [6][16].
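The comparison above is simple arithmetic, sketched here in Python. The function name `debt_density` is an assumption for illustration, and how "problematic lines" get counted is left to whatever analysis tool produces the report.

```python
def debt_density(debt_lines: int, total_lines: int) -> float:
    """Fraction of the codebase flagged as debt (0.0 to 1.0)."""
    if total_lines <= 0:
        raise ValueError("total_lines must be positive")
    return debt_lines / total_lines


# The two codebases from the example above.
spread_out = debt_density(10_000, 100_000)     # 10% of lines are problematic
concentrated = debt_density(5_000, 10_000)     # 50% of lines are problematic

print(f"spread out:   {spread_out:.0%}")
print(f"concentrated: {concentrated:.0%}")
```

The second codebase carries half as much debt in absolute terms but five times the density, which is why it is the one in worse shape.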

Step 2: Add Human Context Through Principal Council Review

Once the AI has created a baseline, human experts step in to refine the analysis by adding essential context. Teams like AlterSquare’s Principal Council bring in the historical and business knowledge that AI simply cannot replicate. They examine the report for flagged issues but also look for what’s missing – like edge cases the AI might have overlooked or business constraints that affect priorities.

To ensure the AI’s suggestions align with real-world needs, experts use tools like the "10 Questions" Test and a Devil’s Advocate Workflow. These processes prompt questions such as "What specific problem does this solve?", "Is there a simpler alternative?", and "What’s the fallback plan?" [5]. The Devil’s Advocate Workflow is particularly valuable – it encourages humans to form an initial architectural opinion, then have the AI critique it. This back-and-forth helps uncover potential failure points the AI might miss [5].

Step 3: Create a Traffic Light Roadmap for Prioritization

The final step is to develop a Traffic Light Roadmap – a prioritized plan that categorizes issues into three groups: Critical, Managed, and Scale-Ready. This structured approach forces both AI and humans to provide detailed input, such as severity levels, evidence links, and the projected business impact, rather than vague recommendations [16].

- Critical issues are irreversible decisions, like selecting a database or defining service boundaries. These are high-stakes "one-way doors."

- Managed issues are reversible, such as choosing UI frameworks or folder structures. These are considered "two-way doors" since they can be adjusted later [5].

Before finalizing any decisions, teams should conduct a Failure Mode Analysis (FMA) to identify potential risks. This step ensures the team avoids committing to costly or irreversible paths. By combining AI insights with human expertise, the roadmap becomes a practical and actionable guide for addressing system health and long-term goals.
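One way to enforce "detailed input rather than vague recommendations" is to make the roadmap entry a structure that refuses to exist without evidence. The Python sketch below is an assumption about how such a record could look, not AlterSquare's actual format: the field names, the 1 to 5 severity scale, and the sort order are all illustrative, and the category is assigned by the human reviewers rather than computed.

```python
from dataclasses import dataclass
from enum import IntEnum


class Light(IntEnum):
    CRITICAL = 0      # ordered so that sorting puts Critical first
    MANAGED = 1
    SCALE_READY = 2


@dataclass(frozen=True)
class RoadmapItem:
    title: str
    light: Light                 # chosen by reviewers, not inferred by this code
    severity: int                # 1 (low) to 5 (high); the scale is an assumption
    evidence: tuple[str, ...]    # file paths, dashboards, or incident links
    business_impact: str

    def __post_init__(self):
        if not 1 <= self.severity <= 5:
            raise ValueError("severity must be between 1 and 5")
        if not self.evidence:
            raise ValueError(f"{self.title!r}: at least one evidence link is required")
        if not self.business_impact.strip():
            raise ValueError(f"{self.title!r}: business impact must be stated")


def prioritize(items):
    """Critical first, then Managed, then Scale-Ready; higher severity first within each."""
    return sorted(items, key=lambda item: (item.light, -item.severity))
```

Because validation happens at construction time, a finding that cannot point to a specific file or incident never makes it onto the roadmap in the first place.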

Practical Steps for Founders and Technical Leaders

To address AI’s historical weaknesses, founders and technical leaders need to adopt clear, actionable practices that blend fast-paced development with thoughtful oversight. These steps ultimately determine whether AI serves as a productivity booster or becomes a costly liability.

Use Disposable Architecture to Ship Fast, Then Refactor on Schedule

For early-stage startups, Disposable Architecture is a game-changer. This approach prioritizes speed and market validation over long-term durability. Think of the first version of your system as a prototype meant to be replaced, not perfected. Defer irreversible decisions – like database selection or defining service boundaries – until your business needs are proven, rather than making them on the basis of hypothetical future scale. For reversible choices, such as UI frameworks or folder structures, act quickly since these can be adjusted later [5].

Plan refactoring as a Capital Expenditure (CapEx), rather than waiting for the system to break under pressure. Dedicate about 20% of your engineering capacity to improving your architecture and another 10% to exploring new opportunities [1]. To ensure continuity, document major decisions using Architectural Decision Records (ADRs). These records capture the reasoning behind decisions, including business context, alternatives, and trade-offs, helping preserve institutional memory even as team members come and go. With this flexible setup, you can time your refactoring efforts effectively.
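ADR formats vary between teams, so the following Python sketch is only one possible shape. The `DecisionRecord` class and its fields are assumptions that mirror the elements named above (business context, alternatives, trade-offs), and the sample record is invented for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DecisionRecord:
    number: int
    title: str
    business_context: str
    decision: str
    alternatives: List[str] = field(default_factory=list)
    trade_offs: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the record as plain text suitable for committing next to the code."""
        lines = [
            f"ADR-{self.number:04d}: {self.title}",
            "",
            "Context",
            self.business_context,
            "",
            "Decision",
            self.decision,
            "",
            "Alternatives considered",
        ]
        lines += [f"- {a}" for a in self.alternatives] or ["- (none recorded)"]
        lines += ["", "Trade-offs"]
        lines += [f"- {t}" for t in self.trade_offs] or ["- (none recorded)"]
        return "\n".join(lines)


# Hypothetical example record.
record = DecisionRecord(
    number=7,
    title="Separate lane for transactional alerts",
    business_context="A marketing blast delayed critical alerts for paying customers.",
    decision="Route transactional alerts through a dedicated, prioritized queue.",
    alternatives=["Single shared queue with larger consumers"],
    trade_offs=["One more queue to operate and monitor"],
)
print(record.render())
```

Keeping records like this in the repository also gives an AI assistant something concrete to be shown before it proposes a change, instead of relying on the memory of whoever happens to be reviewing.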

Trigger Refactoring Based on Revenue, Not Arbitrary Timelines

Refactoring should be tied to revenue growth and performance risks, rather than arbitrary deadlines or the perception of messy code. The 12-Month Growth Test is a practical benchmark: if your system can’t handle the traffic and data load projected for the next year, it’s time to refactor [17]. Refactoring is also necessary when the costs of downtime or performance risks outweigh the engineering effort required.
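A rough version of the 12-Month Growth Test can be written down in a few lines. The Python sketch below assumes steady compound monthly growth and a single capacity number measured by load testing; both are simplifications, and the function name and example figures are hypothetical.

```python
def growth_test(current_peak_rps: float,
                monthly_growth_rate: float,
                tested_capacity_rps: float,
                months: int = 12):
    """Return (passes, projected_peak) under compound monthly growth.

    monthly_growth_rate is a fraction, e.g. 0.10 for 10% per month.
    """
    projected_peak = current_peak_rps * (1 + monthly_growth_rate) ** months
    return projected_peak <= tested_capacity_rps, projected_peak


# Hypothetical numbers: 200 requests/second today, growing 10% per month,
# with load tests showing the system holds up to 500 requests/second.
passes, projected = growth_test(200, 0.10, 500)
print(f"projected peak: {projected:.0f} rps, passes: {passes}")
```

In this example the projected peak is roughly 628 requests per second, above the tested capacity of 500, so the system fails the test and refactoring is worth scheduling before the growth arrives.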

Comprehensive overhauls, like moving to microservices, aren’t always the best first step. Start by identifying bottlenecks in your system, such as endpoints with high latency or frequent errors. Fixing issues like N+1 queries, missing database indexes, or inefficient loops can often buy you another 6–12 months of runway. If you need to scale specific components, consider introducing "seams" – clear boundaries within your monolith, such as modules or asynchronous queues. This keeps complexity manageable while allowing for incremental scaling [17]. Throughout this process, ensure AI remains a supportive tool rather than the sole decision-maker.
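For readers unfamiliar with the N+1 pattern, the Python sketch below shows it next to a single-query alternative, using the standard library's `sqlite3` module and an in-memory database. The `users` and `orders` tables and their contents are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE users  (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total REAL);
CREATE INDEX idx_orders_user_id ON orders(user_id);  -- the index a JOIN on user_id relies on
INSERT INTO users  VALUES (1, 'ada'), (2, 'lin');
INSERT INTO orders VALUES (1, 1, 20.0), (2, 1, 5.0), (3, 2, 12.5);
""")


def totals_n_plus_one(conn):
    """One query for the users, then one more query per user (N+1 round trips)."""
    totals = {}
    users = conn.execute("SELECT id, name FROM users").fetchall()
    for user_id, name in users:
        row = conn.execute(
            "SELECT COALESCE(SUM(total), 0) FROM orders WHERE user_id = ?",
            (user_id,),
        ).fetchone()
        totals[name] = row[0]
    return totals


def totals_single_query(conn):
    """The same result in one round trip, using a JOIN and GROUP BY."""
    rows = conn.execute("""
        SELECT u.name, COALESCE(SUM(o.total), 0)
        FROM users u
        LEFT JOIN orders o ON o.user_id = u.id
        GROUP BY u.id, u.name
    """).fetchall()
    return dict(rows)


assert totals_n_plus_one(conn) == totals_single_query(conn)
```

With two users the difference is invisible; with tens of thousands, the first version issues tens of thousands of queries per request, which is the kind of bottleneck worth fixing before reaching for a new architecture.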

Keep AI in a Support Role, Not a Decision-Making Role

AI is excellent at tasks like analysis, drafting reports, and identifying risks, but it lacks the nuanced understanding needed for critical architectural decisions. To ensure past lessons and system knowledge guide your strategy, a technical leader should always make the final call, especially for irreversible choices [5].

To counter automation bias, require engineers to document their opinions and validate AI-generated suggestions through structured workflows, such as a Devil’s Advocate process or the "10 Questions" Test (e.g., "What’s the simplest alternative?" or "Can the team maintain this solution?"). These steps ensure decisions are grounded in human judgment [5].

A study from Harvard Business School in September 2025 highlighted this dynamic. It found that high-performing entrepreneurs in Kenya using a GPT-4-based business assistant saw a 10–15% boost in revenues and profits. However, lower-performing entrepreneurs experienced an 8% decline when they followed generic AI advice without applying contextual judgment [15]. The same principle applies to system architecture: AI can enhance good decision-making but cannot replace it.

Conclusion: The Future of AI-Augmented Architecture

Key Takeaways

AI has become a powerful tool for engineering teams, but it cannot replace the critical structural judgment that prevents costly mistakes. Fixing architectural problems after deployment can cost anywhere from 15 to 100 times more, and debugging AI-generated code is often 3 to 4 times more expensive due to its lack of context [1]. When AI drives architectural decisions without human oversight, it risks repeating the same errors. Historical context is essential to avoid these pitfalls.

The most effective strategy is to treat AI as a "power tool" for handling repetitive tasks and boilerplate code, while humans act as the "architectural safety valve" to ensure structural soundness [18]. AI operates on probabilities, predicting outcomes based on patterns, but core business logic requires deterministic execution to ensure reliability. As Saurabh Kohli from Towards AI aptly states:

"If your architecture cannot withstand a probabilistic component that occasionally lies, hallucinates, or misinterprets instructions, it is not ready for AI" [7].

A hybrid approach works best: leverage AI as a "Devil’s Advocate" to challenge your architectural plans and uncover hidden failure points, but always rely on a technical leader to make the final call on irreversible decisions [5]. This approach ensures more robust and resilient architecture choices.
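The earlier point about wrapping a probabilistic component in deterministic logic can be sketched as follows. In this Python example, model output is treated as untrusted input and checked against fixed rules before anything acts on it. The action names, the refund ceiling, and the choice of "escalate" as the safe fallback are all assumptions made for illustration.

```python
import json

ALLOWED_ACTIONS = {"refund", "escalate", "no_action"}   # illustrative allowlist
MAX_REFUND_CENTS = 5_000                                # illustrative business limit
FALLBACK = {"action": "escalate", "amount_cents": 0}    # hand off to a human


def parse_model_action(raw: str) -> dict:
    """Validate untrusted model output; return the safe fallback on anything unexpected."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return dict(FALLBACK)
    if not isinstance(data, dict):
        return dict(FALLBACK)

    action = data.get("action")
    amount = data.get("amount_cents", 0)

    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return dict(FALLBACK)
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, int):
        return dict(FALLBACK)
    if not 0 <= amount <= MAX_REFUND_CENTS:
        return dict(FALLBACK)

    return {"action": action, "amount_cents": amount}
```

The model can still be wrong, but the blast radius is bounded: the worst it can do is pick an allowed action within an allowed range, and anything malformed is routed to a person.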

Next Steps for Founders and Leaders

To move forward, combine human judgment with AI tools in a structured way. Start by auditing your current decision-making processes and documenting the rationale behind architectural choices. This prevents AI from suggesting changes that conflict with lessons learned in the past.

Consider adopting frameworks like AlterSquare’s Variable-Velocity Engine (V2E) to align architectural strategies with business growth stages. For example:

- Disposable Architecture to achieve quick revenue milestones.

- Managed Refactoring to scale without breaking systems.

- Governance & Efficiency to prepare for exit-readiness.

Before making major architectural decisions, use an AI-Agent Assessment to generate a System Health Report. This report highlights architectural coupling, security vulnerabilities, and areas of tech debt. Follow that with a Principal Council review, where human expertise categorizes issues into a Traffic Light Roadmap: Critical, Managed, or Scale-Ready. This process ensures that AI identifies risks, but humans determine which ones are acceptable.

The future of architecture doesn’t rely on being AI-first or human-first. It’s about being architecture-first, with AI as a collaborative partner and humans as the ultimate decision-makers. Strike the right balance, and you’ll deliver faster while keeping technical debt under control.

FAQs

When is it safe to let AI influence architecture decisions?

AI can play a valuable role in shaping architectural decisions when used as a supporting tool rather than taking the lead. Human oversight remains critical, particularly during the early planning phases, to assess whether AI’s recommendations align with long-term goals. AI performs most effectively in structured, production-ready systems that are built to address practical issues like data drift and security risks. This ensures its suggestions are both relevant to the context and dependable.

How do we capture “scar tissue” so AI doesn’t repeat old failures?

To capture "scar tissue" and stop AI from repeating past mistakes, document and analyze system failures as they happen. Build a knowledge base that catalogs lessons learned so that historical context is preserved. This includes keeping records of architectural decisions, the reasoning behind them, and their outcomes.

Pair this documentation with human oversight. Experienced architects should review AI-generated suggestions, keeping past errors in mind. By blending these historical insights with expert judgment, you can create systems that are better equipped to handle challenges and avoid repeating missteps.

What review steps should we require before shipping AI-suggested designs?

Before rolling out AI-suggested designs, it’s crucial to ensure the architecture resolves previous system failures, verify that the complex patterns proposed by AI are relevant, and maintain human oversight throughout the process. This approach helps avoid overcomplicating the system and prevents unnecessary technical debt, as AI might suggest intricate patterns without fully grasping the broader context.
